Professional Distillation Equipment: Key Features and Selection Tips for Expert Results

2026-05-07

Achieving expert-level results in distillation starts with the right equipment—precision, durability, and efficiency are non-negotiable. But with countless options on the market, how do you choose the setup that truly elevates your craft? At DYE, we understand that every detail matters, from heating uniformity to material purity. In this guide, we’ll walk you through the essential features and insider selection tips that separate professional-grade stills from the rest, helping you make an informed decision without the guesswork.

Precision Temperature Control: The Silent Architect of Flavor

Every degree matters when coaxing delicate notes from tea leaves or coffee grounds. A swing of just two or three degrees can turn a bright, floral cup into something bitter and hollow. Precision temperature control works behind the scenes, holding the water at that exact sweet spot where aromatic compounds bloom without scalding. It’s not just about hitting a number; it’s about giving the ingredients the steady, gentle heat they need to unfold fully.

Think of it as a slow, deliberate conversation rather than a rushed monologue. When the temperature is locked in, extraction becomes even and intentional. Oils and acids release in harmony, building layers that an impatient kettle simply can’t achieve. This quiet consistency is what separates a memorable sip from a forgettable one, a nuance often felt rather than articulated.

In the end, temperature control is the invisible hand guiding flavor development. It doesn’t shout for attention, but its absence is immediately noticeable. Whether you’re chasing the subtle sweetness of a white tea or the rich body of a dark roast, the right heat makes the difference between something merely warm and something truly alive.
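Holding a liquid at a set point is, in practice, a feedback loop. The following is a minimal sketch of that idea, assuming a proportional-integral controller driving a toy first-order heating model. The gains, heater strength, and heat-loss coefficient are hypothetical values chosen only to make the simulation behave sensibly; they do not describe any particular kettle or still.

```python
def simulate_hold(setpoint_c, start_c=20.0, ambient_c=20.0, steps=3000, dt=1.0):
    """Simulate a PI controller steering a heater toward setpoint_c.

    Returns the list of simulated temperatures, one per time step.
    Every constant below is an illustrative assumption.
    """
    kp, ki = 0.2, 0.002        # proportional and integral gains (assumed)
    max_heat_c_per_s = 0.5     # temperature rise per second at full power (assumed)
    loss_per_s = 0.002         # fractional loss toward ambient per second (assumed)

    temp = start_c
    integral = 0.0
    history = []
    for _ in range(steps):
        error = setpoint_c - temp
        raw = kp * error + ki * integral
        power = min(1.0, max(0.0, raw))   # heater can only run between 0% and 100%
        if raw == power:                  # simple anti-windup: integrate only when unsaturated
            integral += error * dt
        heating = power * max_heat_c_per_s
        cooling = loss_per_s * (temp - ambient_c)
        temp += (heating - cooling) * dt
        history.append(temp)
    return history


if __name__ == "__main__":
    temps = simulate_hold(85.0)
    print(f"final temperature: {temps[-1]:.2f} C, peak: {max(temps):.2f} C")
```

The integral term is what removes the steady offset that a purely proportional controller would leave once heat loss balances the heater output.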

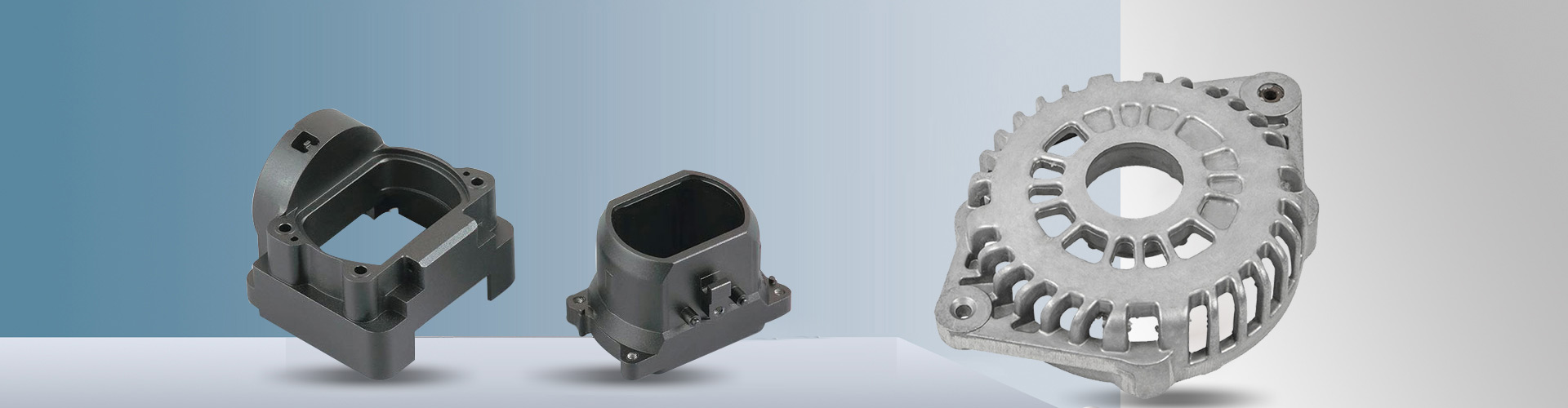

Material Integrity: Why Your Column's Composition Defines the Spirit

The soul of a column isn't in its silhouette alone—it's woven into the very matter from which it rises. Stone, with its ancient weight and cool endurance, speaks of permanence and earthly gravity. Marble, veined with the passage of millennia, carries a luminous authority that seems to breathe with the space it commands. Concrete, in its raw modernity, offers a blank canvas of brutal honesty, while timber columns bring warmth, a memory of the forest, and an organic dialogue with time as they age and settle. Each material whispers a different story, and that story becomes inseparable from the space's atmosphere, shaping how we feel within it long before we consciously notice the form.

Beyond aesthetics, material integrity dictates a column's structural truth. A fluted marble pillar isn't merely ornamental; the fluting itself lightens the visual mass while enhancing the stone's innate compressive strength. Cast iron, with its ability to be moulded into delicate, almost botanical forms, allowed the Industrial Age to marry factory precision with organic fancy—strength disguised as ornament. Modern steel columns, slender and unadorned, speak of tensile potency and an honest expression of load-bearing logic. The composition determines not only what the column can hold but how it communicates that capacity: a stone column reassures with bulk, while steel astonishes with its minimalism, and both achieve a deep-seated trust through the raw, unapologetic nature of their substance.

In the end, a column's material is its spirit made manifest, a covenant between the artistic vision and the physical world. It reminds us that architecture is never just about space; it's about substance. Choosing granite over plaster, oak over aluminium, is a decision that ripples through the sensory and symbolic layers of an environment. It can root a room to the earth or lift it toward the ethereal; it can evoke the stoic confidence of a temple or the innovative pulse of a contemporary gallery. The integrity of the column, its refusal to pretend to be anything other than what it is, becomes the quiet anchor of a building's character: an embodied ethos supporting not just the roof but the entire emotional weight of the place.

Sizing Without the Guesswork: Matching Capacity to Ambition

Many teams fall into the trap of sizing their infrastructure based on rough estimates or, worse, a fear of falling short. They add headroom they'll never use, just to be safe. But there's a better way. Real sizing starts with a brutally honest look at what your ambition actually demands—not the padded version you'd like to present. It means dissecting current workloads, understanding peak patterns, and projecting growth that's rooted in real data, not wishful thinking. When you align capacity with genuine need, you stop paying for idle resources and start building a foundation that flexes with you, not against you.

The trick is to treat capacity not as a fixed pool but as a set of dials you can adjust as ambitions evolve. Too often, organizations lock themselves into multi-year contracts or oversized clusters because no one wanted to do the math upfront. Instead, break down your goals into measurable units—transactions per second, concurrent users, data ingest rates—and map them to the resources they truly consume. Then add a slim buffer, just enough to absorb surprises, and commit to revisiting those numbers quarterly. This approach turns sizing from a one-time gamble into a continuous conversation, where every upgrade or scale-down is a deliberate, informed choice.
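The mapping from a measurable goal to resources plus a slim buffer can be written down as simple arithmetic. The sketch below is one way to do it, assuming demand and per-unit capacity are expressed in the same unit (for example, transactions per second). The function name and the 15% default buffer are illustrative assumptions, not recommended values.

```python
import math


def units_needed(peak_demand, capacity_per_unit, buffer_fraction=0.15):
    """Return how many resource units cover peak_demand plus a buffer.

    peak_demand and capacity_per_unit must share a unit (e.g. transactions/s).
    buffer_fraction is the extra headroom, e.g. 0.15 for 15% (assumed default).
    """
    if capacity_per_unit <= 0:
        raise ValueError("capacity_per_unit must be positive")
    if peak_demand < 0 or buffer_fraction < 0:
        raise ValueError("peak_demand and buffer_fraction must be non-negative")
    return math.ceil(peak_demand * (1 + buffer_fraction) / capacity_per_unit)


if __name__ == "__main__":
    # Hypothetical numbers: 1,800 transactions/s at peak, 250 per node.
    print(units_needed(1800, 250))
```

Re-running the same calculation each quarter with fresh measurements is what keeps the number tied to actual demand.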

Perhaps the biggest shift is cultural: moving away from the idea that 'bigger is always better.' Ambition shouldn't be measured in terabytes reserved but in outcomes delivered. Teams that master sizing without guesswork celebrate efficiency as much as expansion. They instrument their systems to surface real usage, set thresholds that trigger action, and aren't afraid to downsize when the data calls for it. The result is a leaner operation that can pivot quickly, take on new challenges, and actually deliver on its ambitions—without the drag of overprovisioning or the risk of under-delivering.
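A threshold that triggers action, in either direction, can be as small as the following sketch. It assumes utilization is sampled as a fraction between 0 and 1; the 80% and 30% thresholds and the action names are hypothetical placeholders to be replaced with values drawn from your own usage data.

```python
def scaling_action(utilization_samples, scale_up_at=0.80, scale_down_at=0.30):
    """Suggest 'scale_up', 'scale_down', or 'hold' from recent utilization samples.

    utilization_samples: iterable of fractions in the range 0..1.
    Thresholds are illustrative assumptions, not recommendations.
    """
    samples = list(utilization_samples)
    if not samples:
        return "hold"  # no data, no change
    average = sum(samples) / len(samples)
    if average >= scale_up_at:
        return "scale_up"
    if average <= scale_down_at:
        return "scale_down"
    return "hold"


if __name__ == "__main__":
    print(scaling_action([0.90, 0.85, 0.95]))
    print(scaling_action([0.10, 0.20, 0.15]))
```

Treating the scale-down branch as a first-class outcome is the point: the data is allowed to argue for less capacity as well as more.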

Hidden Design Flaws That Sabotage Consistency

It’s rarely the obvious mistakes that undo a design system. The real culprits are the subtle, almost invisible flaws that accumulate over time. A component might look identical in the library, but its behavior shifts ever so slightly between contexts—spacing rules that break under specific screen widths, or hover states that only work on desktop. These tiny inconsistencies erode user trust quietly, often going unnoticed until someone on the team finally asks why the same button feels sluggish in one part of the product but snappy elsewhere.

Another overlooked pitfall lives in the blurry boundary between design tools and live code. Designers polish every pixel in a Figma file where constraints don’t fully mirror reality, and developers interpret those specs through their own technical lens. The result? A text field that gracefully truncates in the mockup but overflows in production, or a card layout that adapts beautifully to a fixed viewport but unravels when real user data varies in length. These mismatches aren’t flagged by typical QA because they don’t break functionality outright—they just make the experience feel disjointed, like walking through a house where every door needs a slightly different amount of force to open.

The most insidious design flaws, however, aren’t visual at all. They hide in interaction patterns that lack coherent logic. Maybe the product uses three different kinds of “back” navigation depending on how you arrived at a screen, or confirmation dialogues that look identical but require different gestures to dismiss. Users won’t articulate why they feel uneasy; they’ll simply perceive the tool as clumsy or unpredictable. This fragmentation often stems from teams iterating quickly on features without a shared interaction vocabulary, creating a silent tax on usability that’s hard to measure but impossible to ignore once you start paying attention.

Advanced Techniques the Manual Won't Teach You

Sometimes the real magic lies in understanding the hardware well enough to bypass the prescribed software limits. For instance, many microcontrollers have undocumented debug interfaces or backdoors left by the engineers during prototyping. Learning to probe with a logic analyzer and deciphering test points can let you enable features the consumer interface never intended to expose. The manual will tell you the safe operating range, but real tinkering is about finding those grey areas where components still respond reliably but unlock new capabilities.

Another trick is mastering firmware manipulation without recompiling. By directly editing the binary image, you can alter calibration tables, sensor thresholds, or even patch conditional jumps to change behavior. This isn't about hacking into secured systems; it's about customizing your own devices beyond what the configuration menus allow. A hex editor and a lot of patience become your best friends here, especially when combined with incremental backups so you never lose a working state.
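A careful binary edit checks what it is about to overwrite and never modifies the only copy. The sketch below illustrates that habit on an in-memory image; the function name, offset, and byte values are hypothetical and do not correspond to any real firmware layout.

```python
def patch_image(image: bytes, offset: int, expected: bytes, replacement: bytes) -> bytes:
    """Return a patched copy of image, leaving the original untouched.

    The patch is refused unless the bytes currently at offset equal expected,
    which guards against editing the wrong location or the wrong firmware version.
    """
    if len(expected) != len(replacement):
        raise ValueError("replacement must be the same length as the bytes it overwrites")
    end = offset + len(expected)
    if offset < 0 or image[offset:end] != expected:
        raise ValueError("bytes at offset do not match the expected original")
    return image[:offset] + replacement + image[end:]


if __name__ == "__main__":
    # Hypothetical 4-byte image; change a two-byte threshold at offset 1.
    original = bytes([0x10, 0x20, 0x30, 0x40])
    patched = patch_image(original, 1, b"\x20\x30", b"\x21\x31")
    print(original.hex(), "->", patched.hex())
```

Writing the returned bytes to a new file, rather than over the source file, keeps the last working image available as a backup.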

Finally, stacking unconventional component combinations rarely appears in official documentation. Running a motor driver IC well below its intended voltage to use it as a linear actuator, or repurposing an audio amplifier IC as a piezo transducer driver with a custom feedback loop: these are the patterns you develop through experimentation. Shared in niche forums rather than instruction books, they turn ordinary off‑the‑shelf parts into solutions no datasheet ever predicted.

When Automation Becomes a Necessity, Not a Luxury

A decade ago, automating a single workflow felt like an indulgence—something reserved for well-funded teams with time to experiment. Today, it’s the floor you stand on to stay operational. Supply chain disruptions, labor shortages, and the sheer volume of data have pushed businesses past the point where manual processes can keep up. If you’re still treating automation as a nice-to-have, you’re already falling behind.

This shift isn’t limited to factory floors or IT departments. Plumbers use scheduling bots to avoid missed appointments; coffee shops lean on inventory systems that reorder beans before they run out. The line between “essential” and “optional” blurred when customer expectations outpaced human bandwidth. Nobody cares how the work gets done—they just expect it to be done, fast and without error.

The real wake-up call comes from resilience. When a sudden market swing or staffing hiccup hits, automated systems don’t call in sick or misread a spreadsheet. They absorb the shock. That’s why adoption has rocketed past early-adopter circles into the heart of small businesses and solo operators. Automation isn’t a luxury you add during a good quarter—it’s the backbone you rely on when things get rough.

FAQ

What key features should I look for in professional distillation equipment?

Look for precise temperature control, high-quality column packing materials, and efficient condensation systems. A reflux ratio controller can fine-tune separation, while borosilicate glass or stainless steel construction prevents contamination. Adequate insulation also helps maintain consistent temperatures throughout the process.

How do I choose the right capacity?

Start by estimating your typical batch volume and frequency. For research labs, a 1-5 liter system usually suffices; pilot plants may need 20-50 liters. Consider future scale-up potential and whether you'll run continuous or batch processes. Oversizing can waste energy and solvent, while undersized equipment limits throughput.

Which features make day-to-day operation easier?

Automated fraction collectors save time and reduce errors, vacuum jacketed columns minimize heat loss, and self-cleaning features simplify maintenance. Pressure regulation systems for vacuum distillation are invaluable for heat-sensitive compounds. Also, quick-connect fittings speed up setup and cleaning.

Which construction materials should I choose?

Glass offers visibility and chemical inertness but is fragile; borosilicate resists thermal shock. Stainless steel is durable and ideal for high-pressure or large-scale operations but can react with some acids. PTFE components provide excellent chemical resistance for seals and gaskets. Match materials to your specific solvent and temperature profiles to avoid corrosion or contamination.

What safety features are essential?

Over-temperature cutoffs, pressure relief valves, and spark-proof components are essential. Look for systems with automatic shutoff if cooling water fails. Fume extraction attachments and leak detection sensors add extra layers of protection, especially when distilling volatile or toxic substances.

How can I improve energy efficiency?

Insulate columns and boilers to reduce heat loss, use heat recovery systems like feed-effluent heat exchangers, and optimize reflux ratios. Variable speed drives on vacuum pumps and efficient condenser designs (e.g., coil vs. shell-and-tube) can also lower energy consumption. Regular maintenance keeps the system at peak efficiency.

Should I choose short path or fractional distillation?

Short path is best for heat-sensitive, high-boiling compounds under vacuum, offering faster separations with minimal degradation. Fractional distillation excels for mixtures with close boiling points requiring multiple theoretical plates. Consider the purity needed and the physical properties of your components. Many labs benefit from having both setups available.
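To get a feel for why close-boiling mixtures demand more theoretical plates, the Fenske equation gives the minimum number of stages at total reflux for a binary mixture. The sketch below assumes a constant relative volatility and compositions given as mole fractions of the lighter component; the function name and example figures are illustrative only and are not a substitute for a full column design.

```python
import math


def fenske_min_stages(x_distillate, x_bottoms, relative_volatility):
    """Minimum theoretical stages at total reflux (Fenske equation, binary mixture).

    x_distillate, x_bottoms: mole fraction of the lighter component in each product.
    relative_volatility: assumed constant over the column and greater than 1.
    """
    if relative_volatility <= 1.0:
        raise ValueError("relative_volatility must be greater than 1")
    if not (0.0 < x_bottoms < x_distillate < 1.0):
        raise ValueError("require 0 < x_bottoms < x_distillate < 1")
    separation = (x_distillate / (1.0 - x_distillate)) * ((1.0 - x_bottoms) / x_bottoms)
    return math.log(separation) / math.log(relative_volatility)


if __name__ == "__main__":
    # Hypothetical target: 95% light component overhead, 5% left in the bottoms.
    for alpha in (1.5, 2.5):
        stages = fenske_min_stages(0.95, 0.05, alpha)
        print(f"alpha = {alpha}: about {stages:.1f} minimum stages")
```

As the relative volatility approaches 1, the required stage count climbs steeply, which is the situation where a fractionating column earns its place over a short path setup.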

Conclusion

Seasoned distillers know that the still's true worth lies in the nuance of its design, not the shine of its copper. Precision temperature control is the quiet force shaping each fraction, allowing you to pull hearts with surgical accuracy instead of settling for blurred cuts. Your column's composition matters just as much—copper catalyzes sulfur removal, while inert stainless holds its ground for neutral work, but a mismatched alloy can ghost unwanted flavors into an otherwise flawless run. Sizing the rig is never a simple scale-up; an oversized boiler can strangle efficiency on small batch days, and an undersized one can choke your growth. Then there are the hidden gremlins—poorly placed thermowells, restrictive vapor paths, or sloppy condenser drains—that erode consistency batch by batch. Investing time to understand these elements before a single drop is collected separates a reliable setup from a lingering frustration.

What truly elevates output goes beyond the spec sheet. Mastering advanced techniques means learning to read the mash and adjust reflux ratios dynamically, often overriding the textbook numbers. It's about trusting your senses at the spout rather than blindly relying on digital readouts that may lag or mislead. Automation has its place, but only when it complements your judgment—automated cuts are a luxury until they become a necessity for scaling up safely and repeatably. The best results come from a partnership between you and the machine, where the equipment handles the mundane while you focus on the craft's subtle decisions. That shared intelligence, born from thoughtful selection and hands-on experience, is what ultimately produces spirits with clarity, depth, and a signature that's unmistakably yours.

Contact Us

Contact Person: Ada Xu

Email: [email protected]

Tel/WhatsApp: 0577-86806088

Website: https://www.dayuwz.com/